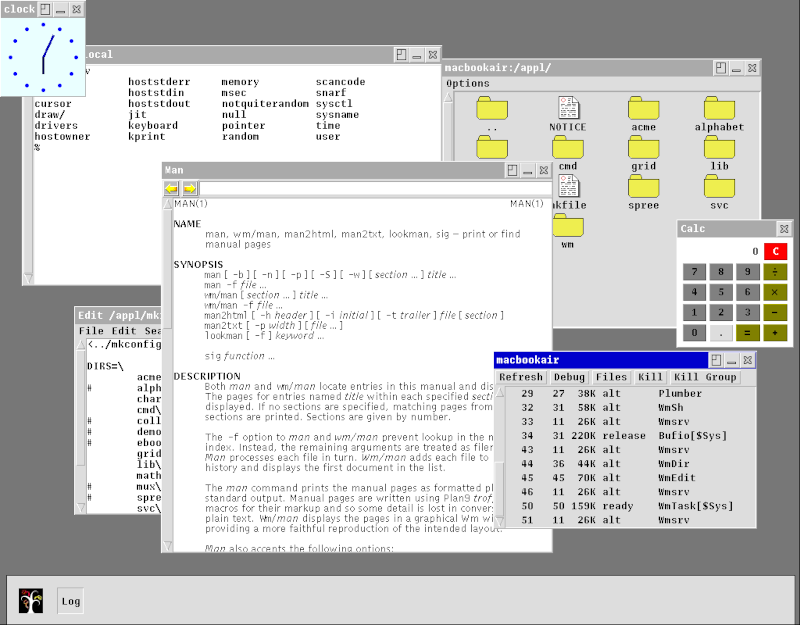

Fig 1. The Inferno64 graphical environment (wm) managing concurrent processes across the grid.

Inferno64 is a modern evolution of the classic distributed operating system. It provides a seamless, peer-to-peer compute grid designed to run on everything from modern desktops to reclaimed hardware. By networking together discarded phones, old laptops, and idle processors, Inferno64 creates a decentralized execution environment for distributed workloads and local AI hosting.

Notice: Inferno64 is an ongoing, highly aspirational research and development project. While core components like the Dis VM and basic networking are operational, features like the llama.cpp fileserver, the Owen labor exchange, and the AMD64/ARM64 JIT are in active, heavy development. Expect sharp edges, frequent core dumps, and rapid architectural shifts. Contributors and fellow silicon recyclers are welcome.

A native fileserver exposes llama.cpp, allowing grid nodes to read and write to large language models as if they were standard text files.

While rooted in the elegant design of the original system, Inferno64 introduces critical modernizations for today's hardware landscape:

| Feature | Original Inferno OS | Inferno64 |
|---|---|---|
| Target Architecture | x86, ARM (Early), MIPS | Modern ARM64, AMD64, Windows 11, macOS, Android/Termux |
| Execution Engine | Classic Dis VM | Dis VM with highly optimized AMD64 and ARM64 JIT Compilers |
| Tooling Ecosystem | Standard `acme` / shell tools | A modern Limbo package manager, a dedicated CLI debugger, and tools for making Limbo more legible to AI agents |

Millions of capable processors sit idle in drawers across the globe. Inferno64 utilizes a peer-to-peer compute model to harness this wasted potential. A 2013 MacBook Air, a reclaimed Lenovo Ideacentre, and an old Android phone can be bound together using the Styx protocol into a single, cohesive computing fabric.

The Owen Labor Exchange: At the heart of the cluster lies the Owen labor exchange, serving as the dedicated control plane. Owen intelligently manages resource discovery across the network, schedules distributed workloads, and facilitates the token-based compute economy. Whether it is routing WASM payloads or distributing LLM inference tasks, the Owen exchange ensures processes are allocated to the most capable idle nodes efficiently.
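
As a rough illustration of the allocation idea described above, the following sketch hands each task to the most capable idle node. It is not Owen's actual scheduler or API: the `Node` fields, the abstract `capability` score, and the `assign` function are hypothetical names chosen for this example, and real scheduling would also need to account for discovery, tokens, and node failure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    """Hypothetical view of a grid node; not Owen's real data model."""
    name: str
    capability: float  # assumed abstract score, higher means more capable
    idle: bool = True


def assign(task: str, nodes: list[Node]) -> Optional[Node]:
    """Give the task to the most capable idle node, or None if all are busy."""
    idle_nodes = [n for n in nodes if n.idle]
    if not idle_nodes:
        return None
    best = max(idle_nodes, key=lambda n: n.capability)
    best.idle = False  # mark busy so the next task goes elsewhere
    return best


# Example grid; the capability numbers are made up for illustration.
grid = [
    Node("macbook-air-2013", capability=2.0),
    Node("ideacentre", capability=3.5),
    Node("android-phone", capability=1.0),
]

for task in ["llm-inference", "wasm-payload"]:
    node = assign(task, grid)
    print(task, "->", node.name if node else "no idle node")
```

With these made-up scores, the first task lands on `ideacentre` and the second on `macbook-air-2013`, since the busiest-capable node is marked busy after each assignment.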

Leveraging the native LLM fileservers, Inferno64 hosts built-in AI coding agents capable of interacting directly with the distributed OS. Further automation is possible with a personal Claw: an autonomous AI agent framework designed to run on personal hardware and execute tasks across a user's digital life. It lets the agent traverse filesystems, operate command-line tools, and orchestrate complex operations across the compute grid.

Security and containment are handled natively through Inferno's fundamental architecture. Because every process in Inferno constructs its own configurable namespace, the AI agent operates within a strictly defined reality. By simply omitting directories, network interfaces, or sensitive files from the agent's unique namespace, it remains perfectly sandboxed. It can only see, touch, and use the exact resources it has been explicitly granted.
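
The containment model can be pictured with a small conceptual sketch. This is plain Python, not Limbo and not Inferno's real bind/mount interface; the `Namespace` class and every path below are hypothetical. It only models the idea that a process cannot resolve anything that was never bound into its own namespace.

```python
class Namespace:
    """Toy model of a per-process namespace: a map from paths to resources."""

    def __init__(self):
        self._bound = {}

    def bind(self, path: str, resource: object) -> None:
        """Explicitly grant a resource by making it visible at path."""
        self._bound[path] = resource

    def open(self, path: str) -> object:
        """Resolve a path; anything never bound simply does not exist here."""
        if path not in self._bound:
            raise FileNotFoundError(path)
        return self._bound[path]


# Build the agent's namespace by granting only what it needs.
agent_ns = Namespace()
agent_ns.bind("/work/project", "project files")
agent_ns.bind("/n/llm", "LLM fileserver")

print(agent_ns.open("/work/project"))

try:
    agent_ns.open("/usr/me/keys")  # never bound, so invisible to the agent
except FileNotFoundError as err:
    print("not in namespace:", err)
```

The design point is that access is granted by construction rather than revoked by rules: there is no deny-list to get wrong, because an omitted resource has no name the agent could use to reach it.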

To join the grid, pull the repository and build the host environment: